Recently, Amazon unveiled its wearable band Halo, which generated a lot of internet chatter, distinctive memes, and many raised eyebrows. The reaction was driven primarily by two major features of the Halo band.

The first feature that stuck out like a sore thumb for many was body image analysis: you take a photo of yourself, and the system analyzes the image and provides you with body composition metrics.

The second feature, and the subject of this article, is voice sentiment analysis, which detects the tone of a user’s voice and provides feedback, or, as Amazon pitches it:

Maintain Relationship Health with the tone of voice analysis

And you thought Amazon and relationship advice would never appear in the same sentence, not even in your wildest dreams.

Setting the privacy-related issues aside for a moment, let’s take a look under the hood of this unique feature.

UPDATE: Amazon no longer supports Halo products, including the Halo Band, Halo View, and Halo Rise. Beginning on August 1, 2023, these products and the Amazon Halo app won’t work.

Related reading:

- Amazon Halo – Easy to set up offering a variety of fitness features for a wide audience

- Amazon launches Fitness Band Halo with premium subscription features

- Amazon starts notifying early access users for its fitness band Halo

- Amazon Alexa as a Self-Hypnosis tool for personalized health management

- Verily researching a new finger wearable continuous blood pressure monitor

What is the basic value offering around Halo’s Voice Tone Analysis?

In any conversation, the speaker’s tone of voice and body language offer cues to listeners. A speaker may not be aware of the emotional state that others perceive in their speech.

It’s quite possible that you sound irritable to others after a restless night, or that your voice patterns change dramatically because of stress you are experiencing.

If a system could help you analyze how you sound to others and, more importantly, identify the factors that might be driving changes in your tone, you could become more aware of those factors and make appropriate adjustments.

A person’s wellbeing and emotional state are interrelated

A poor emotional state can directly impact a person’s health, just as an illness or other health event may impact a person’s emotional state.

A person’s emotional state may also affect the people they communicate with. For example, speaking to someone in an angry tone may provoke an anxious response in the listener.

Therefore, this feature could offer real value if it is done correctly.

How does Amazon Voice Tone analysis work behind the scenes?

Amazon Halo has already started shipping to early access customers. Before trying out the feature, we wanted to understand the science behind it. To that end, we located the details of the feature in a recently published Amazon patent application (published on Sept 24) titled “SYSTEM FOR ASSESSING VOCAL PRESENTATION”.

From Raw Voice Data to generating Inferences

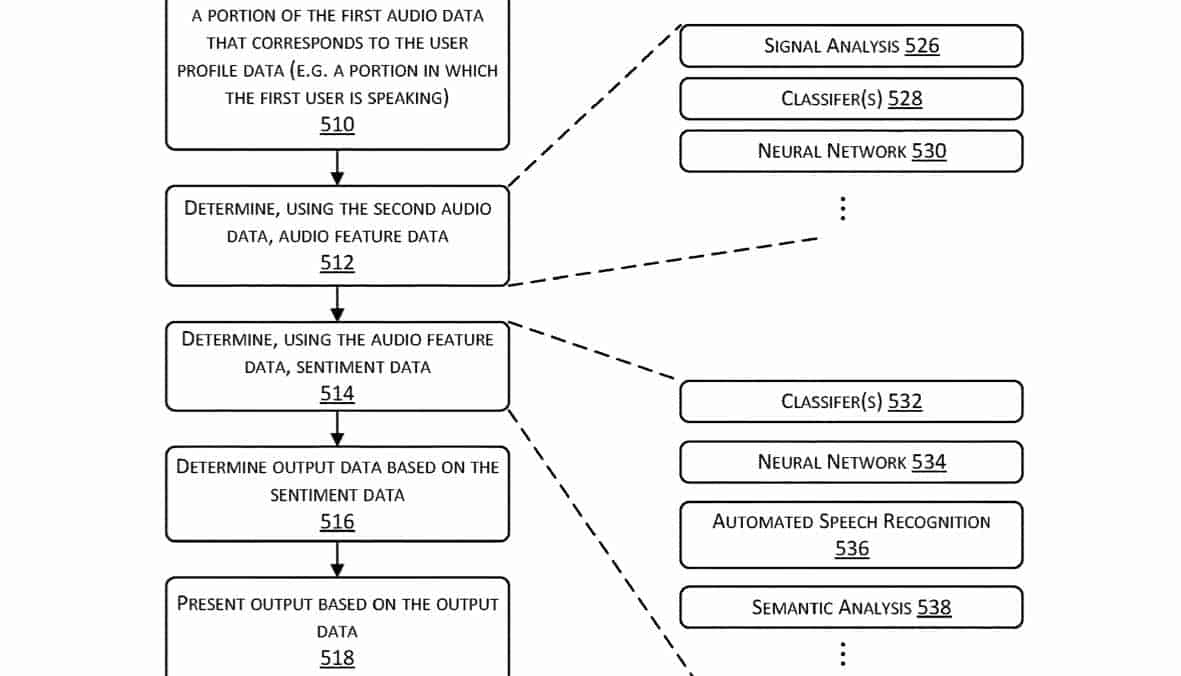

Raw audio acquired from one or more microphones on the Halo wearable is processed to produce audio data associated with a particular user.

This audio data is then processed to determine audio feature data. Next, the audio feature data is processed by a convolutional neural network to generate feature vectors representative of the audio data and changes in the audio data. The audio feature data is then processed to determine sentiment data for that particular user.
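To make the stages above concrete, here is a minimal Python sketch of the raw audio → feature data → feature vectors → sentiment flow. It is not Amazon’s model: the frame size assumes 16 kHz audio, the two frame features (RMS energy and zero-crossing rate) are illustrative choices, the fixed KERNELS are hypothetical stand-ins for learned CNN filters, and READOUT is a hypothetical linear layer.

```python
import math
from typing import List, Sequence

FRAME = 400  # assumed: 25 ms frames at 16 kHz

# Hypothetical fixed kernels standing in for learned CNN filters.
# kernel[j][c] weights feature channel c (0 = RMS energy, 1 = zero-crossing rate)
# at time offset j within a two-frame window.
KERNELS = [
    [[0.5, 0.5], [0.5, 0.5]],   # "level": average energy + ZCR over two frames
    [[-1.0, 0.0], [1.0, 0.0]],  # "change": frame-to-frame change in energy
]
READOUT = [0.8, 1.5]  # hypothetical weights mapping each vector to a sentiment logit


def frame_features(samples: Sequence[float], frame: int = FRAME) -> List[List[float]]:
    """Raw audio -> audio feature data: [RMS energy, zero-crossing rate] per frame."""
    feats = []
    for start in range(0, len(samples) - frame + 1, frame):
        chunk = samples[start:start + frame]
        rms = math.sqrt(sum(x * x for x in chunk) / frame)
        crossings = sum(1 for a, b in zip(chunk, chunk[1:]) if (a >= 0) != (b >= 0))
        feats.append([rms, crossings / (frame - 1)])
    return feats


def feature_vectors(feats: List[List[float]]) -> List[List[float]]:
    """Audio feature data -> feature vectors via a 1-D convolution over time."""
    width = len(KERNELS[0])
    vectors = []
    for t in range(len(feats) - width + 1):
        vectors.append([
            sum(k[j][c] * feats[t + j][c] for j in range(width) for c in range(2))
            for k in KERNELS
        ])
    return vectors


def sentiment_score(vectors: List[List[float]]) -> float:
    """Feature vectors -> one sentiment value in [-1, 1] (toy linear read-out + tanh)."""
    if not vectors:
        return 0.0
    logits = [sum(w * v for w, v in zip(READOUT, vec)) for vec in vectors]
    return math.tanh(sum(logits) / len(logits))
```

For example, feeding in [0.1] * 400 + [0.5] * 400 (a quiet frame followed by a louder one) yields a single feature vector whose “change” component is about 0.4, reflecting the jump in energy between frames.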

The processing is not limited to analyzing raw audio with a neural network; the results are also correlated with additional sensor data to infer likely factors that might be causing changes in tone.

For example, the system might look at how much you exercised the previous day, how much you slept, or changes in your heart rate, and try to correlate these metrics with changes in voice sentiment data.
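The correlation step can be sketched with a plain Pearson coefficient. This sketch assumes each factor series is already aligned so that entry i holds the previous day’s value (sleep hours, steps, and so on) and entry i of the sentiment series holds the following day’s average sentiment. The function names, and the idea of ranking factors by absolute correlation, are our own illustration rather than details from the patent.

```python
import math
from typing import Dict, List, Sequence, Tuple


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length >= 2")
    mx = sum(xs) / len(xs)
    my = sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        return 0.0  # a constant series carries no correlation signal
    return cov / (sx * sy)


def rank_factors(factors: Dict[str, Sequence[float]],
                 sentiment: Sequence[float]) -> List[Tuple[str, float]]:
    """Rank candidate factors by the magnitude of their correlation with sentiment."""
    scored = [(name, pearson(series, sentiment)) for name, series in factors.items()]
    return sorted(scored, key=lambda item: abs(item[1]), reverse=True)
```

Correlation is not causation, so a ranking like this only suggests which factors might be worth surfacing to the user.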

Sentiment Evaluation using pace and pitch of Voice

The AI system that processes the raw voice data looks for distinct changes over time in pitch, pace, and tone that might indicate various emotional states.
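Pitch is usually the first of these cues to extract. A common textbook approach, shown here as a sketch rather than the method Halo actually uses, is autocorrelation: a voiced frame closely resembles a copy of itself shifted by one pitch period, so the lag with the strongest self-similarity gives the fundamental frequency. The 50 to 400 Hz search range is an assumed range for speaking voices.

```python
from typing import Sequence


def estimate_pitch(frame: Sequence[float], sample_rate: int,
                   fmin: float = 50.0, fmax: float = 400.0) -> float:
    """Estimate the fundamental frequency (Hz) of a voiced frame via autocorrelation.

    Searches lags corresponding to fmax..fmin and returns sample_rate / best_lag.
    Returns 0.0 for an empty or silent frame.
    """
    if not frame:
        return 0.0
    min_lag = int(sample_rate / fmax)
    max_lag = min(int(sample_rate / fmin), len(frame) - 1)
    mean = sum(frame) / len(frame)
    x = [s - mean for s in frame]  # remove any DC offset
    if all(v == 0 for v in x):
        return 0.0
    best_lag, best_r = min_lag, float("-inf")
    for lag in range(min_lag, max_lag + 1):
        # Unnormalized autocorrelation at this lag
        r = sum(x[i] * x[i + lag] for i in range(len(x) - lag))
        if r > best_r:
            best_lag, best_r = lag, r
    return sample_rate / best_lag
```

For a frame of 800 samples of a 200 Hz sine sampled at 8 kHz, the strongest lag is 40 samples, so the estimate is 200 Hz. Tracking this value against a user’s baseline over time gives the “change in pitch” signal described above.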

For example, speech with a faster pace may correspond to an emotional state described as “excited”, while slower-paced speech may be described as “bored”.

In another example, an increase in average pitch may be indicative of an emotional state of “angry” while an average pitch that is close to a baseline value may be indicative of an emotional state of “calm”.
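The two examples above can be expressed as simple rules relative to a per-user baseline. This sketch is only illustrative: the Baseline fields, the 15% tolerance, and the order in which the rules are checked (pitch before pace) are our assumptions, and the patent describes neural-network processing rather than hand-written thresholds.

```python
from dataclasses import dataclass


@dataclass
class Baseline:
    pace_wps: float  # user's typical speaking rate, words per second (assumed unit)
    pitch_hz: float  # user's typical average pitch


def label_emotion(pace_wps: float, pitch_hz: float, base: Baseline,
                  tol: float = 0.15) -> str:
    """Toy rules mirroring the patent's examples; thresholds are hypothetical.

    tol is the relative deviation from baseline still treated as "close to baseline".
    """
    pitch_dev = (pitch_hz - base.pitch_hz) / base.pitch_hz
    pace_dev = (pace_wps - base.pace_wps) / base.pace_wps
    if pitch_dev > tol:
        return "angry"    # average pitch well above baseline
    if pace_dev > tol:
        return "excited"  # noticeably faster than usual
    if pace_dev < -tol:
        return "bored"    # noticeably slower than usual
    return "calm"         # pitch and pace near baseline
```

Comparing against the user’s own baseline, rather than a population average, matters here: a naturally fast talker should not be labeled “excited” every time they speak.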

For more detailed information, check out the references at the bottom of the article, particularly the work by Viktor Rozgic on emotion recognition using acoustic and lexical features.

When does the Amazon Halo system do the sentiment analysis?

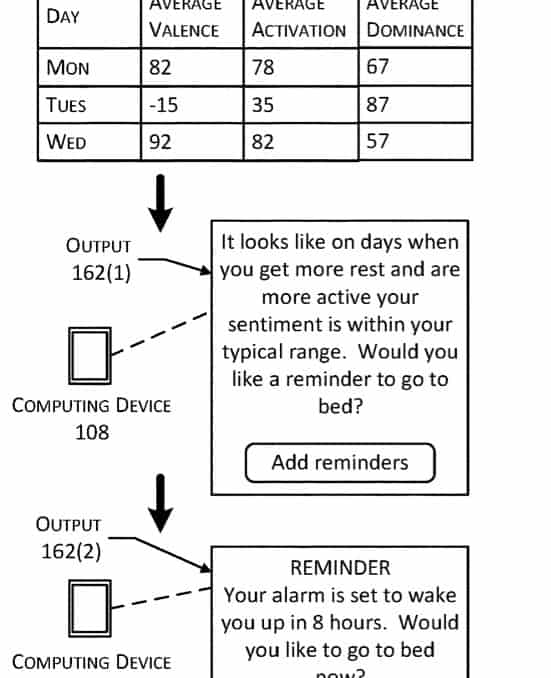

The output may summarize sentiment data over various spans of time, such as the past few minutes, the last scheduled appointment, or the past day.
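Summarizing over those spans amounts to averaging timestamped sentiment scores within a window. Here is a minimal sketch, assuming scores in [-1, 1] stored as (timestamp, score) pairs and a meeting supplied as a (start, end) pair, for example from the user’s calendar. The function names and the five-minute window are hypothetical.

```python
from datetime import datetime, timedelta
from statistics import mean
from typing import Dict, List, Optional, Tuple

Sample = Tuple[datetime, float]  # (timestamp, sentiment score in [-1, 1])


def window_mean(samples: List[Sample], start: datetime,
                end: datetime) -> Optional[float]:
    """Average sentiment for samples with start <= timestamp <= end, or None if none."""
    values = [score for ts, score in samples if start <= ts <= end]
    return mean(values) if values else None


def summaries(samples: List[Sample], now: datetime,
              meeting: Tuple[datetime, datetime]) -> Dict[str, Optional[float]]:
    """Summaries over the kinds of spans the patent mentions."""
    return {
        "last_5_min": window_mean(samples, now - timedelta(minutes=5), now),
        "last_meeting": window_mean(samples, meeting[0], meeting[1]),
        "past_day": window_mean(samples, now - timedelta(days=1), now),
    }
```

Returning None for an empty window, instead of 0.0, keeps “no speech detected” distinct from “neutral sentiment”.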

This implies that users looking for feedback could pick a particular earlier meeting or conversation from that day and see how they sounded.

A real-time feedback system could also be used to alert the user when the sound of their speech indicates they are in an excitable state, giving them the opportunity to calm down.
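A real-time alert needs to avoid firing on every momentary spike. One simple way to do that, assumed here for illustration rather than taken from the patent, is to smooth a stream of arousal scores with an exponential moving average and add hysteresis: alert when the smoothed score rises above an upper threshold (on), and re-arm only after it drops below a lower threshold (off). The threshold and smoothing values are hypothetical.

```python
from typing import Iterable, Iterator, Optional, Tuple


def arousal_alerts(scores: Iterable[float], alpha: float = 0.3,
                   on: float = 0.7, off: float = 0.5) -> Iterator[Tuple[int, float]]:
    """Yield (index, smoothed_score) each time smoothed arousal rises above `on`.

    The exponential moving average damps momentary spikes; hysteresis (the score
    must fall below `off` before re-arming) prevents repeated alerts.
    """
    ema: Optional[float] = None
    armed = True
    for i, s in enumerate(scores):
        ema = s if ema is None else alpha * s + (1 - alpha) * ema
        if armed and ema > on:
            armed = False
            yield i, ema
        elif not armed and ema < off:
            armed = True
```

With the default settings, a stream of five 0.2 scores, ten 1.0 scores, ten 0.0 scores, and ten more 1.0 scores produces alerts at indices 7 and 28: one per excited stretch rather than one per sample.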

Amazon also describes the concept of “advisories”: according to the patent, the system may use the sentiment data and the user status data to provide advisories.

For example, the user status data may include information such as hours of sleep, heart rate, number of steps taken, and so forth.

The sentiment data and sensor data acquired over the past several days may be analyzed to determine, for example, that after a night with more than 7 hours of rest, the next day’s sentiment data indicates the user is more agreeable and less irritable.
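The “more than 7 hours” comparison can be sketched as a difference of group means. This assumes each record pairs one night’s sleep with the following day’s average sentiment score; the record layout and the function name are hypothetical.

```python
from statistics import mean
from typing import List, Optional, Tuple

# Each record pairs one night's sleep (hours) with the NEXT day's mean sentiment.
Night = Tuple[float, float]


def sleep_effect(nights: List[Night], threshold: float = 7.0) -> Optional[float]:
    """Next-day sentiment after nights above `threshold` hours minus the rest.

    Positive values suggest the user sounds more agreeable after longer sleep.
    Returns None if either group is empty.
    """
    rested = [s for hours, s in nights if hours > threshold]
    short = [s for hours, s in nights if hours <= threshold]
    if not rested or not short:
        return None
    return mean(rested) - mean(short)
```

For nights [(8.0, 0.4), (7.5, 0.2), (5.0, -0.3), (6.0, -0.1)], the rested nights average 0.3 and the short nights -0.2, so the function returns about 0.5.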

The user interface may then present an advisory suggesting the user is ready to conquer the world :) or, if the user slept only a few hours, an advisory suggesting more rest.
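Turning a learned sleep effect into an advisory can be as simple as a few rules. In this hypothetical sketch, effect is the previously measured difference in next-day sentiment between long and short nights for this user; the 7-hour and 4-hour cut-offs, the minimum effect, and the message text are all placeholders, not Amazon’s wording.

```python
def advisory(last_sleep_hours: float, effect: float,
             rested_hours: float = 7.0, short_hours: float = 4.0,
             min_effect: float = 0.1) -> str:
    """Choose an advisory message (hypothetical rules and wording).

    effect: previously measured next-day sentiment difference between
    long-sleep and short-sleep nights for this user.
    """
    if effect < min_effect:
        # Sleep has not shown a meaningful link to this user's tone yet.
        return "No clear link between your sleep and how you sound yet."
    if last_sleep_hours > rested_hours:
        return "Well rested: you tend to sound more upbeat on days like this."
    if last_sleep_hours < short_hours:
        return "Short night: you tend to sound more irritable. Consider extra rest."
    return "Typical night: no advisory today."
```

Gating on effect keeps the advisory personal: a user whose tone does not track their sleep is not told to rest more.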

These advisories are designed to help a user regulate their activity, provide feedback to make healthy lifestyle changes, and maximize the quality of their health.

How does the Tone Analysis feature work on the app?

The MHA team took the Amazon Halo Tone analysis feature for a detailed spin. Everything from the initial setup of tone analysis to actually using it was a breeze. Amazon has done a remarkably good job with the navigation screens in Halo, and the step-by-step setup screens were super intuitive.

Being able to track voice sentiment in real time with very low latency was a neat experience.

Most importantly, for those with privacy concerns, Amazon has done a good job not only of being transparent about this feature but also of providing quick and easy tools for deleting any specific conversation, or all conversations.

Blood Pressure, Blood Glucose, and EDA Sensors on Future Amazon Halo?

Based on our reading of the patent, Amazon appears to be exploring additional sensors for its Halo wearable. Here are three sensor technologies that stood out for us.

- Blood Pressure Sensor – A blood pressure sensor (element 126(2) in the patent) could be embedded in a future version of the wearable band. One design uses a camera that acquires images of blood vessels and determines blood pressure by analyzing changes in the diameter of the vessels over time. The patent also describes a design in which a sensor transducer is in contact with the user’s skin near a blood vessel.

- Blood Glucose Sensor – A glucose sensor (element 126(13)) may be used to determine the concentration of glucose within the user’s blood or tissues. The glucose sensor 126(13) may comprise a near-infrared spectroscope that determines the concentration of glucose or glucose metabolites in tissues. An alternative design could use a chemical detector that measures the presence of glucose or glucose metabolites at the surface of the user’s skin.

- Pulse Oximeter – A pulse oximeter (element 126(3)) may be configured to provide sensor data indicative of the user’s cardiac pulse rate and blood oxygen saturation.
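The patent only says the pulse oximeter provides pulse rate and oxygen saturation data. For readers curious how such numbers are typically derived, here is a minimal sketch of the general textbook approach, not Amazon’s implementation: pulse rate from the spacing of upward mean-crossings in a photoplethysmography (PPG) trace, and SpO2 from the “ratio of ratios” of red and infrared signals using the commonly quoted empirical line SpO2 ≈ 110 − 25R. Real oximeters use device-specific calibration curves, so these constants are illustrative only.

```python
from typing import Sequence


def pulse_rate_bpm(ppg: Sequence[float], sample_rate: float) -> float:
    """Pulse rate from the average spacing of upward mean-crossings in a PPG trace."""
    mean = sum(ppg) / len(ppg)
    x = [v - mean for v in ppg]
    crossings = [i for i in range(1, len(x)) if x[i - 1] < 0 <= x[i]]
    if len(crossings) < 2:
        return 0.0  # not enough beats to measure an interval
    avg_interval = (crossings[-1] - crossings[0]) / (len(crossings) - 1)
    return 60.0 * sample_rate / avg_interval


def ratio_of_ratios(red: Sequence[float], infrared: Sequence[float]) -> float:
    """R = (AC_red / DC_red) / (AC_ir / DC_ir), using peak-to-peak as AC and mean as DC."""
    def ac_over_dc(sig: Sequence[float]) -> float:
        return (max(sig) - min(sig)) / (sum(sig) / len(sig))
    return ac_over_dc(red) / ac_over_dc(infrared)


def spo2_percent(red: Sequence[float], infrared: Sequence[float]) -> float:
    """SpO2 via the empirical line 110 - 25 * R, clamped to [0, 100].

    Real devices replace this line with a device-specific calibration curve.
    """
    r = ratio_of_ratios(red, infrared)
    return max(0.0, min(100.0, 110.0 - 25.0 * r))
```

With synthetic 1.2 Hz signals where the infrared pulsation is twice as strong as the red one (R = 0.5), this returns a pulse rate near 72 bpm and an SpO2 of 97.5%.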

Privacy Issues with Amazon Halo Voice Tone and Sentiment analysis

Both the body composition feature and the voice tone analysis feature are optional for Halo users. You can choose to use them or opt out entirely.

Based on our reading of the patent, it appears that Amazon has taken some steps to address privacy-related issues.

For example, when your raw voice data is captured and processed for tone and sentiment analysis, the system discards audio data that is not associated with the particular user and generates the audio feature data from the audio data that is associated with the particular user.

After the audio feature data is generated, the particular user’s audio data is also discarded. Based on this design, it appears that Amazon would keep only the feature data (or metadata of the voice) and discard the actual voice sample.
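The discard-as-you-go flow described above can be sketched as follows. Speaker matching is an assumption here: each speech segment is taken to arrive with a speaker embedding from some voice model, and a segment counts as the user’s if its cosine similarity to the enrolled embedding passes a hypothetical 0.8 threshold. The Segment type and the feature extractor are placeholders. Only feature lists leave the function; raw audio for every segment is cleared.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Segment:
    embedding: List[float]  # speaker embedding from a hypothetical voice model
    audio: List[float]      # raw samples for this stretch of speech


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def extract_features(audio: Sequence[float]) -> List[float]:
    """Placeholder feature extractor: [mean absolute amplitude, sample count]."""
    if not audio:
        return [0.0, 0.0]
    return [sum(abs(s) for s in audio) / len(audio), float(len(audio))]


def process(segments: List[Segment], enrolled: Sequence[float],
            threshold: float = 0.8) -> List[List[float]]:
    """Keep features for the enrolled user's speech only; drop all raw audio."""
    features = []
    for seg in segments:
        if cosine(seg.embedding, enrolled) >= threshold:
            features.append(extract_features(seg.audio))
        seg.audio.clear()  # discard raw audio for every segment, matched or not
    return features
```

Clearing a Python list only drops the references to the samples; a production system would also need to ensure the underlying audio buffers are not retained or persisted anywhere else.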

Quite frankly, Amazon’s product collateral does not make clear how it handles privacy and sensitivity issues beyond what we can find in the patent. Given the privacy pushback we have seen from the interested user community, it would be great if the company provided additional details about its plans for user anonymity and privacy.

In Summary

Although there are outstanding issues around the privacy and sensitivity design of these features, we have to recognize that Amazon has drawn on its AI and engineering capabilities to put forth some very innovative ideas for wearable use cases.

Additionally, given that the team is exploring new high-end, first-to-market sensors such as those for blood glucose and blood pressure monitoring, Amazon has the potential to become a serious competitor to existing wearable players in the near future.

References:

1. https://www.isca-speech.org/archive/sp2002/sp02_423.html

2. https://hal.archives-ouvertes.fr/hal-00499173/document

4. Amazon Halo tone analysis patent application, “System for Assessing Vocal Presentation” (US20200302952)