Mixed reality maker Magic Leap was granted a patent today that describes how the company’s wearable system can be used to interpret sign language. The patent builds on the existing Magic Leap wearable spatial computer by adding accessibility features.

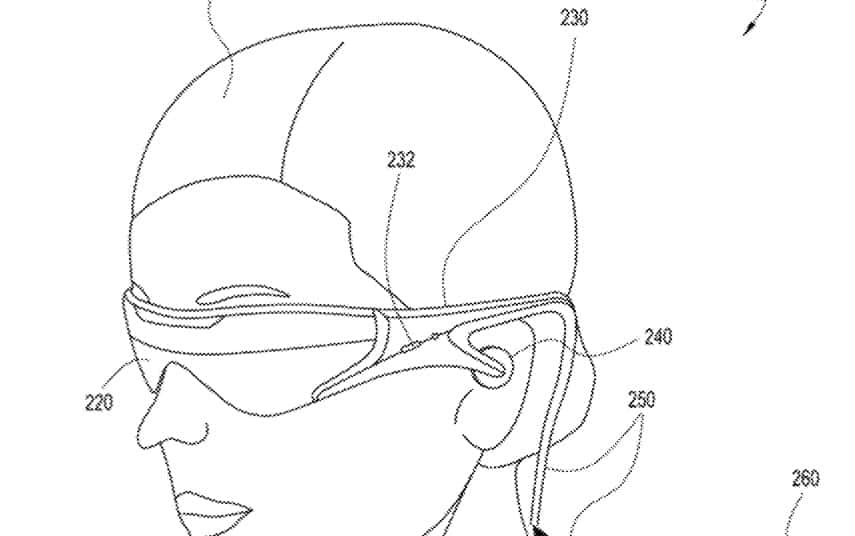

The invention is a sensory eyewear system for a mixed reality device that can facilitate a user’s interactions with other people or with the environment. As one example, the sensory eyewear system can recognize and interpret sign language and present the translated information to the user of the mixed reality device.

The wearable system can also recognize text in the user’s environment, modify the text (e.g., by changing the content or display characteristics of the text), and render the modified text to occlude the original text.

These embodiments advantageously may permit greater interaction among differently-abled persons.

A sign language primarily uses visual gestures (e.g., hand shapes; hand orientations; hand, arm, or body movements; or facial expressions) to communicate. There are hundreds of sign languages in use around the world, some more widely used than others. For example, American Sign Language (ASL) is widely used in the U.S. and Canada.

The basic idea is that many people do not know or cannot interpret sign languages.

A speech- or hearing-challenged person and a conversation partner may not be familiar with the same sign language. This can impede conversation with hearing-challenged or speech-challenged persons.

Accordingly, a wearable system that can image signs (e.g., gestures) being made by a conversation partner, convert the signs to text or graphics (e.g., graphics of sign language gestures in a sign language the system user understands), and then display information associated with the signs (e.g., a translation of the signs into a language understood by the user) would greatly improve communication between the user and the conversation partner.

Magic Leap’s plan is to use the outward-facing camera on the HMD (head-mounted device) to capture images of the gestures being made, identify signs from those gestures, translate the signs, and display the translation to the user. A machine learning algorithm handles the detection and translation steps.
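To make the capture, identify, translate, and display steps concrete, here is a minimal Python sketch of how such a pipeline could be wired together. It is illustrative only: the patent does not publish an API, so `Frame`, `recognize_sign`, `translate_sign`, `run_pipeline`, and the `SIGN_TO_TEXT` table are all hypothetical names, and the machine learning model is replaced by a simple stand-in.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, List, Optional

# All names below are hypothetical; nothing here comes from the patent
# or from any Magic Leap SDK.


@dataclass
class Frame:
    """One image from the outward-facing camera (pixel data omitted)."""
    # Stand-in for what a trained gesture detector would output for this frame.
    gesture_label: Optional[str]


def recognize_sign(frame: Frame) -> Optional[str]:
    """Stand-in for the machine learning detector: a sign ID, or None."""
    return frame.gesture_label


# Toy lookup table standing in for a learned translation model.
SIGN_TO_TEXT: Dict[str, str] = {
    "ASL_HELLO": "hello",
    "ASL_THANK_YOU": "thank you",
}


def translate_sign(sign: str, table: Dict[str, str] = SIGN_TO_TEXT) -> str:
    """Map a recognized sign to text in the user's language."""
    return table.get(sign, "[unrecognized sign]")


def run_pipeline(frames: Iterable[Frame],
                 display: Callable[[str], None]) -> List[str]:
    """Capture -> identify -> translate -> display, one frame at a time."""
    shown: List[str] = []
    for frame in frames:
        sign = recognize_sign(frame)
        if sign is None:
            continue  # no gesture detected in this frame
        text = translate_sign(sign)
        display(text)  # on a headset this would render text in the user's view
        shown.append(text)
    return shown
```

Calling `run_pipeline([Frame("ASL_HELLO"), Frame(None)], print)` would skip the empty frame and print the translation for the first one. In a real system the stand-in functions would be replaced by trained models operating on camera images.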

US Patent 10,769,858 was filed on Feb 26, 2020, and was granted today (Sept 8, 2020).

The inventors of this accessibility-enhancing wearable are Eric Browy, Michael Janusz Woods and Andrew Rabinovich. Andrew is currently co-founder and CTO at InsideIQ. Eric was previously Lead Optical Systems and Prototype Engineer and now leads the ‘Pathfinding’ team at Magic Leap.