Apple has introduced a feature called Spatial Audio. Available as a setting on both iPhone and iPad, the feature has sparked extensive debate among developers about its functionality and direction.

As one user explained, “Spatial Audio works by using the iPhone’s or iPad’s and the AirPods Gyroscope to realize when you turn your head around and make it still seem like the sound is coming from the device itself so basically the audio shifts around you when you turn your head.”

Others describe Spatial Audio as a kind of simulated surround sound.


Is Apple’s Spatial Audio merely a surround-sound feature, or are there bigger ambitions behind it?

A look through Apple’s recent patent filings suggests that Apple’s ambitions for Spatial Audio go well beyond a simple surround-sound design.

It is, in fact, a well-thought-out value proposition for enhancing accessibility on Apple devices. A recently approved filing shows some of the thinking behind the feature.

According to the patent, the technology could be used in a spatial audio navigation system that delivers navigational information as audio to direct users to target locations.

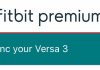

The system is designed to use the directionality of audio played through a binaural audio device to provide navigational cues to the user. A current location, target location, and map information may be input to pathfinding algorithms to determine a real-world path between the user’s current location and the target location.
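The patent excerpt does not say which pathfinding algorithm is used. As a rough illustration only, assuming the map information is reduced to a graph of walkable waypoints with distances in meters, a standard Dijkstra shortest-path search could turn a current and target location into a route. The map, node names, and `shortest_path` function below are hypothetical.

```python
import heapq

def shortest_path(graph, start, goal):
    """Dijkstra's algorithm over a weighted adjacency dict.

    graph: {node: {neighbor: distance_in_meters, ...}, ...}
    Returns (total_distance, [start, ..., goal]), or (inf, []) if unreachable.
    """
    # Priority queue of (distance so far, node, path taken to reach node)
    queue = [(0.0, start, [start])]
    visited = set()
    while queue:
        dist, node, path = heapq.heappop(queue)
        if node == goal:
            return dist, path
        if node in visited:
            continue  # a shorter route to this node was already expanded
        visited.add(node)
        for neighbor, weight in graph.get(node, {}).items():
            if neighbor not in visited:
                heapq.heappush(queue, (dist + weight, neighbor, path + [neighbor]))
    return float("inf"), []

# Hypothetical street map: intersections as nodes, edge weights in meters
city_map = {
    "home": {"corner": 120, "park": 300},
    "corner": {"home": 120, "cafe": 200, "park": 90},
    "park": {"home": 300, "corner": 90, "cafe": 150},
    "cafe": {"corner": 200, "park": 150},
}

distance, route = shortest_path(city_map, "home", "cafe")
print(distance, route)  # → 320.0 ['home', 'corner', 'cafe']
```

The resulting list of waypoints is what a navigation system would then walk the user through, one cue at a time.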

The system will use directional audio played through AirPods to guide the user along the path from the current location to the target location.
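True binaural rendering relies on head-related transfer functions, which are beyond a short example. As a simplified sketch, assuming the device reports the listener's head yaw as a compass heading and the next waypoint's coordinates are known, the cue's direction relative to the head can be computed and mapped to left/right gains with constant-power stereo panning. The function names and the panning approach are illustrative assumptions, not Apple's implementation.

```python
import math

def bearing_deg(lat1, lon1, lat2, lon2):
    """Initial great-circle bearing from point 1 to point 2, degrees clockwise from north."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dlon = math.radians(lon2 - lon1)
    x = math.sin(dlon) * math.cos(phi2)
    y = math.cos(phi1) * math.sin(phi2) - math.sin(phi1) * math.cos(phi2) * math.cos(dlon)
    return math.degrees(math.atan2(x, y)) % 360

def relative_angle(target_bearing, head_yaw):
    """Target direction relative to where the head faces, in (-180, 180]; positive = right."""
    diff = (target_bearing - head_yaw) % 360
    return diff - 360 if diff > 180 else diff

def stereo_gains(rel_angle):
    """Constant-power pan: returns (left_gain, right_gain).

    Angles beyond +/-90 degrees (behind the listener) are clamped to hard
    left/right, a simplification; binaural rendering would also convey front/back.
    """
    clamped = max(-90.0, min(90.0, rel_angle))
    pan = (clamped + 90.0) / 180.0 * (math.pi / 2)  # 0 = hard left, pi/2 = hard right
    return math.cos(pan), math.sin(pan)

# Hypothetical coordinates: the next waypoint lies roughly due east of the listener
b = bearing_deg(37.3349, -122.0090, 37.3349, -122.0050)

# Facing north: right gain dominates, so the cue sounds from the listener's right
left, right = stereo_gains(relative_angle(b, head_yaw=0.0))

# After turning to face the waypoint: left and right are nearly equal (straight ahead)
left, right = stereo_gains(relative_angle(b, head_yaw=b))
```

Recomputing the gains whenever the head yaw changes is what makes the sound appear to stay fixed in space as the user turns.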

Spatial Audio’s connection to Apple Smart Glasses and AR/VR

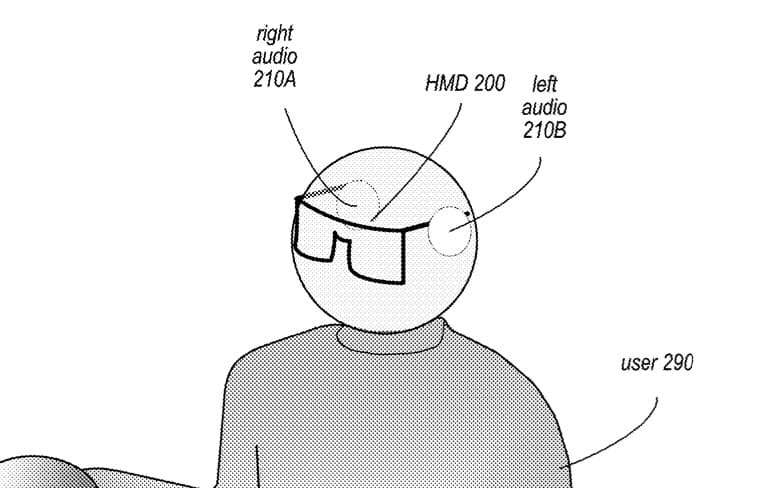

According to the patent, spatial audio is also designed for use in VR and AR systems, where users interact with immersive artificial environments.

Some of the Apple engineers named on this filing, such as Bruno Sommers, appear to have been part of Apple’s AR/VR platform development. Another inventor named in the patent, Rahul Nair, is currently a prototyping engineer with the ARKit group at Apple.

“[E]mbodiments of a spatial audio navigation system may also be implemented in VR/MR systems implemented as head-mounted displays [i.e., Apple smart glasses] that include location technology, head orientation technology, and binaural audio output; speakers integrated with the HMD may be used as the binaural audio device, or alternatively an external headset may be used as the binaural audio device.”

Broadly, the idea is to implement the functionality on any device or system that provides binaural audio output along with head motion and orientation tracking.

Apple also suggests using the spatial audio navigation system while driving.

In this case, the system will use the vehicle’s “surround” speaker system as the binaural audio device, outputting directional sounds that guide the driver to the target location.

Either way, we should soon see the beginnings of these spatial audio features as iOS 14 development continues over the next few weeks, ahead of its release this September.